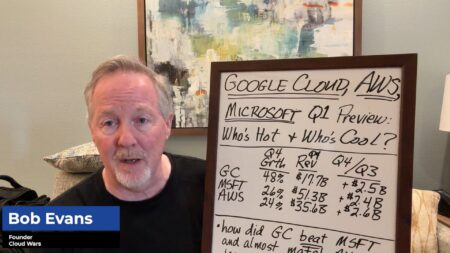

With its expanding partnerships and increasing interoperability, it’s easy to see Microsoft as an enterprise AI gateway rather than a model developer. In fact, it is both: Microsoft has been steadily building an arsenal of AI models that easily compete with the most popular alternatives.

Now, the company has announced details and updates on three newly released Microsoft AI (MAI) models spanning transcription, voice, and image generation. In a blog post introducing the model series, MAI CEO Mustafa Suleyman explained how they echoed the company’s ongoing commitment to developing human-centric AI tools:

“At Microsoft AI, we’re building Humanist AI. We have a distinct view when creating our AI models — putting humans at the center, optimizing for how people actually communicate, training for practical use.” So, what’s new?

MAI-Transcribe-1

MAI-Transcribe-1 enables speech-to-text transcription for the world’s 25 most-used languages. Microsoft says that, for batch transcription, MAI-Transcribe-1 is 2.5x faster than its Microsoft Azure Fast offering. The model is available through Microsoft Foundry, “at the best price-performance of any large cloud provider.”

The model also has a lower Word Error Rate than other leading systems, including GPT-Transcribe, Scribe v2, Gemini 3.1 Flash, and Whisper-large-v3. Microsoft cites video captioning, meeting transcription, accessibility tools, call analysis, content design workflows, and driving voice agents as the leading use cases for the model.
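For readers unfamiliar with the metric, Word Error Rate (WER) is the number of word-level substitutions, deletions, and insertions needed to turn a system’s transcript into the reference transcript, divided by the number of words in the reference. The following is a minimal Python sketch of that standard definition. It is not Microsoft’s evaluation harness, and the function name and sample sentences are illustrative assumptions.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] is the edit distance between the reference words seen so far
    # and the first j hypothesis words (Levenshtein distance over words).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one wrong word out of five reference words.
wer = word_error_rate("the meeting starts at nine", "the meeting start at nine")
print(f"WER: {wer:.2f}")
```

Real benchmarks usually normalize punctuation, casing, and numerals before scoring, so published figures depend on those choices as well as on the model.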

MAI-Voice-1

MAI-Voice-1 is Microsoft’s best voice generation model, and it’s now being rolled out to developers via Foundry and MAI Playground. The model, first announced in August 2025, now allows users to create a custom voice in Foundry from just a short snippet of audio.

MAI-Voice-1 can generate a minute of audio for every second of processing and is highly cost-efficient, with pricing starting at $22 per 1 million characters.
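To put those two figures in context, here is a rough back-of-the-envelope sketch in Python. It assumes a flat rate of $22 per 1 million characters and a constant 60 seconds of audio per second of processing; the article gives only a starting price, so actual billing tiers, rounding, and throughput under load may differ. The function and constant names are hypothetical.

```python
# Figures quoted in the article; treated here as flat rates (an assumption).
PRICE_PER_MILLION_CHARS_USD = 22.0
AUDIO_SECONDS_PER_PROCESSING_SECOND = 60.0


def estimate_tts_cost_usd(num_characters: int) -> float:
    """Estimated cost of synthesizing the given number of characters."""
    return num_characters / 1_000_000 * PRICE_PER_MILLION_CHARS_USD


def estimate_generation_seconds(audio_seconds: float) -> float:
    """Estimated processing time needed to produce the given audio duration."""
    return audio_seconds / AUDIO_SECONDS_PER_PROCESSING_SECOND


# Hypothetical workload: a 50,000-character script yielding 30 minutes of audio.
print(f"Cost: ${estimate_tts_cost_usd(50_000):.2f}")
print(f"Generation time: {estimate_generation_seconds(30 * 60):.0f} s")
```

Under these assumptions, a 50,000-character script would cost about $1.10, and half an hour of audio would take roughly 30 seconds to generate.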

MAI-Image-2

Released in mid-March, MAI-Image-2 was developed in collaboration with creatives in photography and design. It features exceptional clarity, accurate skin tone replication, and natural lighting effects. The model is available through Foundry and Copilot, and Microsoft is currently rolling it out to Bing and PowerPoint.

“MAI-Image-2 is a genuine game-changer,” said Rob Reilly, Global Chief Creative Officer, WPP, whose company is among the first to scale the model at an enterprise level. “It’s a platform that not only responds to the intricate nuance of creative direction, but deeply respects the sheer craft involved in generating real-world, campaign-ready images.”

Closing Thoughts

When MAI first revealed its intentions to launch an in-house model family in September last year, I commented in an article titled “OpenAI and Microsoft Drift Apart as MAI-1 Foundation Model Debuts” that:

“…until now, the company has primarily depended on the capabilities of OpenAI’s large language models (LLMs) to power its next-generation AI tools and platforms. This is about to change, as the company announces a first pair of in-house models developed by the Microsoft AI (MAI) team: MAI-Voice-1 and MAI-1-preview.”

Just six months later, the news isn’t that Microsoft’s advanced models represent a gulf between it and OpenAI, but that they have the potential to shake up the entire industry.

Microsoft still gives its customers access to a wide range of models. But by introducing new models and capabilities that match, and sometimes exceed, those of its competitors, the company is moving, in the AI stakes at least, toward a sovereign AI platform on which customers can choose Microsoft products and services driven by a Microsoft AI engine.
