Have you heard of the Robots Exclusion Protocol, commonly referred to as robots.txt? Unless you work in the publishing industry or are a seasoned web developer, this somewhat nondescript standard is likely new to you.

Introduced in the mid-1990s, during the early internet boom, to help stem the onslaught of web crawlers, the robots.txt protocol became a critical tool for publishers. Using “Allow” and “Disallow” directives, it lets them specify which URLs on their site a crawler or spider may access.
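To make the allow/disallow mechanism concrete, here is a minimal sketch using Python’s standard-library `urllib.robotparser` module. The robots.txt content, the `ExampleAIBot` and `SomeSearchBot` user agents, the `example.com` URLs and the `build_parser` helper are all hypothetical, chosen only for illustration. The sketch shows the check a compliant crawler would run before fetching a page; nothing in the protocol forces a crawler to run it, which is why ignoring robots.txt takes no technical effort at all.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration only: a made-up AI crawler
# ("ExampleAIBot") is barred from the whole site, while every other crawler
# may visit anything except /private/ (with one page explicitly allowed).
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Allow: /private/press-kit.html
Disallow: /private/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text already held in memory (no network request)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    checks = [
        ("ExampleAIBot/1.0", "https://example.com/articles/story.html"),
        ("SomeSearchBot", "https://example.com/articles/story.html"),
        ("SomeSearchBot", "https://example.com/private/report.html"),
        ("SomeSearchBot", "https://example.com/private/press-kit.html"),
    ]
    # A compliant crawler consults can_fetch() before every request;
    # the file itself cannot stop a crawler that simply skips this step.
    for agent, url in checks:
        verdict = "allowed" if rules.can_fetch(agent, url) else "disallowed"
        print(f"{agent:18} {url:45} {verdict}")
```

Running it should report the first and third requests as disallowed and the other two as allowed. One design note: Python’s parser applies the first matching rule in file order, whereas RFC 9309, which standardized the protocol in 2022, applies the most specific (longest) match. Listing the narrower Allow line before the broader Disallow line makes both interpretations agree here.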

Now, with the rise of GenAI, companies have begun using web content to train models and power summarization features. However, recent investigations have found that a number of these companies are ignoring the robots.txt protocol and scraping data without the consent of content creators.

Major Offender

A recent investigation by Wired focused on the practices of Perplexity, an AI search startup whose notable investors include Jeff Bezos and NVIDIA. The investigation found that Perplexity was likely ignoring the robots.txt protocol and scraping sections of websites that publishers had marked as disallowed.

Wired found that Perplexity was doing this on Wired’s own website, as well as on other sites owned by Wired’s parent company, Condé Nast. The investigation also found problems with Perplexity’s output: besides failing to attribute content to its owners, the chatbot at times summarized stories inaccurately.

“In theory, Perplexity’s chatbot shouldn’t be able to summarize WIRED articles, because our engineers have blocked its crawler via our robots.txt file since earlier this year,” says Wired’s Dhruv Mehrotra in his report.

Wired isn’t the only publication taking aim at Perplexity. Forbes has also publicly called out the company, alleging that it lifted a Forbes article and republished it as its own without crediting the Forbes journalists who wrote the original. Nor is Perplexity the only offender.

Growing Concern

Reuters recently reported on the findings of the content licensing platform TollBit. Although it did not name specific companies, TollBit reported that its analysis had found numerous AI agents bypassing the robots.txt protocol.

“What this means in practical terms is that AI agents from multiple sources (not just one company) are opting to bypass the robots.txt protocol to retrieve content from sites,” TollBit told Reuters. “The more publisher logs we ingest, the more this pattern emerges.”

Governance Is Critical

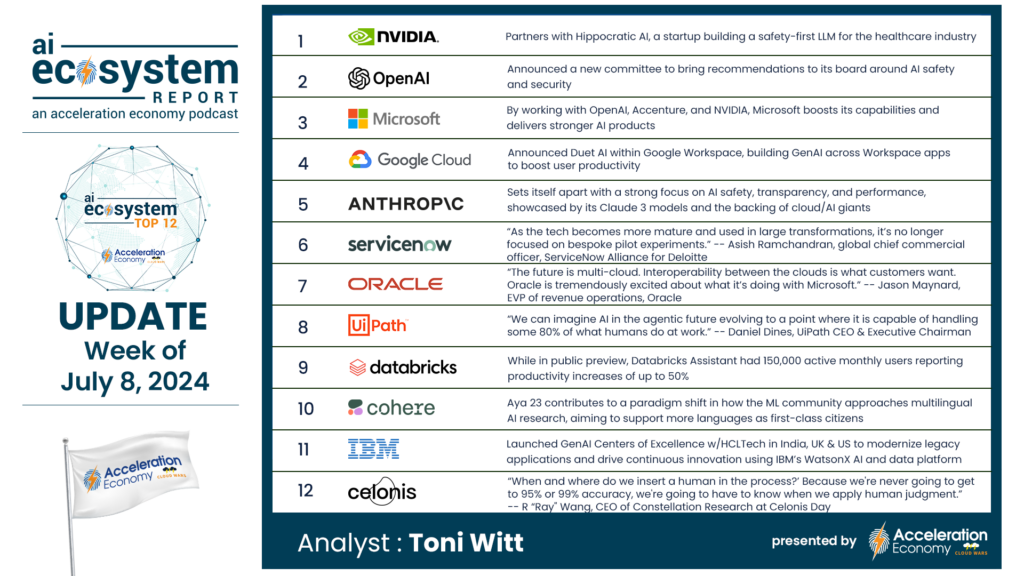

The quest for ethical, trusted AI has become a cornerstone for companies using AI technologies to develop new products and services. We regularly report on companies’ efforts to establish AI governance protocols, such as Salesforce’s joint effort with NIST and Google Cloud’s partnership with Kyndryl on responsible GenAI deployment.

While universal standards for AI governance are not yet in place, work is underway. The US has some limited federal legislation but, as yet, no comprehensive framework. The EU has enacted the first major law governing AI use, the AI Act. And within the business community, there are numerous internal compliance frameworks and AI governance groups, such as the AI Alliance, launched by IBM and Meta in 2023.

However, there is no unified legislative approach that mandates responsible AI governance or specifies how non-compliant offenders are punished. The question also extends beyond any single company’s own models: as well as proprietary LLMs, Perplexity uses off-the-shelf models from companies including OpenAI and Anthropic.

To protect the intellectual property rights of publishers, and in the absence of ethical behavior, we should see laws that make it illegal for organizations to use LLMs for nefarious practices.